Platform

Important

In many cases, you deploy only channels and pipelines onto a platform that you already have. The punch runs fine on any cloud, SaaS or PaaS. There, you are likely to have your Elasticsearch, Kafka, Spark and others already managed for you. If you do not have such a platform, the punch brings one to you. If you have one, this chapter is still relevant, and the punch still provides great features to monitor your various components.

Deploying, running and maintaining a small or big data platform, with or without artificial intelligence, is not easy. It is key to clearly differentiate three layers: the system (hardware, network, OSes), the platform software stack (Spark, Storm, Elasticsearch), and the business logic implemented on top of it.

The platform refers to the various software components you deploy on your system, in charge of hosting your business logic. On a punchplatform you compose your platform according to your needs.

Say you need a log management backend: you are likely to deploy Elasticsearch, some Kibana, and parsing components made of Storm and (maybe) Kafka. If instead you need a machine learning platform, you will install a Spark cluster as well.

Whatever you do, after your deployment phase you end up with what we refer to in this documentation as a platform. It runs nothing: there is no business logic implemented on top of it yet. But it is running, monitored and, last but not least, ready to be easily upgraded.

This chapter describes these platform concepts in more detail.
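The composition idea can be sketched in a few lines of Python. This is only an illustration of the reasoning above, not the punch configuration format: the `COMPONENTS_BY_NEED` table, the need names and the `compose_platform` function are hypothetical, and the sketch assumes that machine learning adds Spark on top of whatever else is selected.

```python
# Hypothetical mapping from a need to the components named in the text.
# These keys and this function are illustrative; they are not a punch API.
COMPONENTS_BY_NEED = {
    "log_management": ["elasticsearch", "kibana", "storm"],
    "machine_learning": ["spark"],
}


def compose_platform(needs, with_kafka=False):
    """Return the sorted, de-duplicated list of components for the given needs."""
    components = set()
    for need in needs:
        if need not in COMPONENTS_BY_NEED:
            raise ValueError(f"unknown need: {need}")
        components.update(COMPONENTS_BY_NEED[need])
    if with_kafka:
        # Kafka is optional in the log management example, so it is opt-in here.
        components.add("kafka")
    return sorted(components)


if __name__ == "__main__":
    print(compose_platform(["log_management"], with_kafka=True))
    print(compose_platform(["log_management", "machine_learning"]))
```

The point of the sketch is that the platform is the union of the components your needs require, and nothing more.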

Deployment

An easy way to quickly understand the value and the concepts of the (punch)platform is to look at how you deploy it.

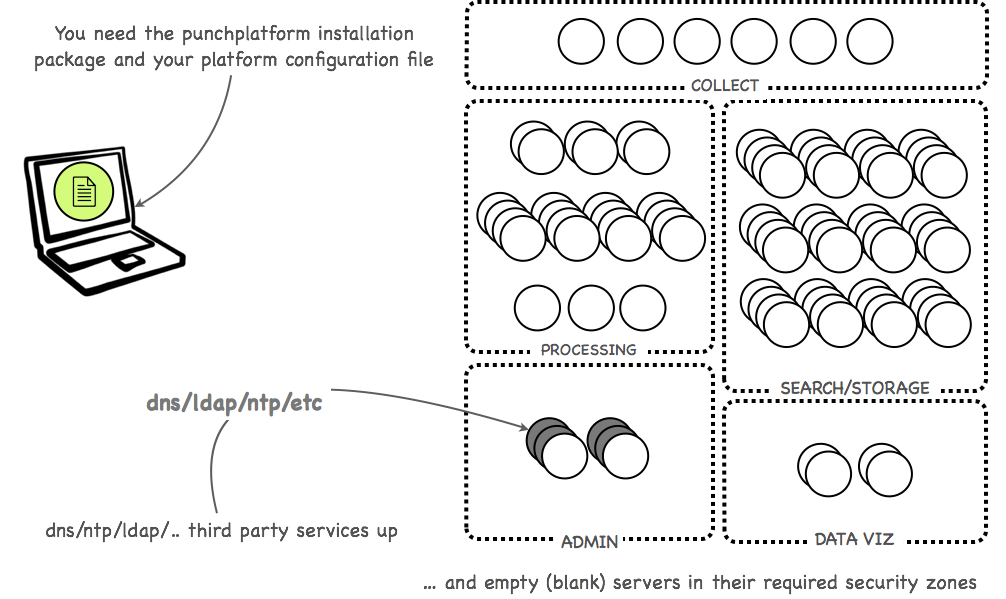

You start from empty, up-and-running servers. These can be hosted on dedicated hardware, on clouds, on Docker/Kubernetes, or anywhere else. To install a platform you simply need one package that delivers all the required application components, and a configuration file that describes what you want.

This is illustrated next, for something that looks like a real-life cyber-security platform with log processing, indexing and archiving in a highly secured environment.

Note that a punchplatform can be much simpler and consist of a single server. We use this example so that you understand what you can possibly build with the punch.

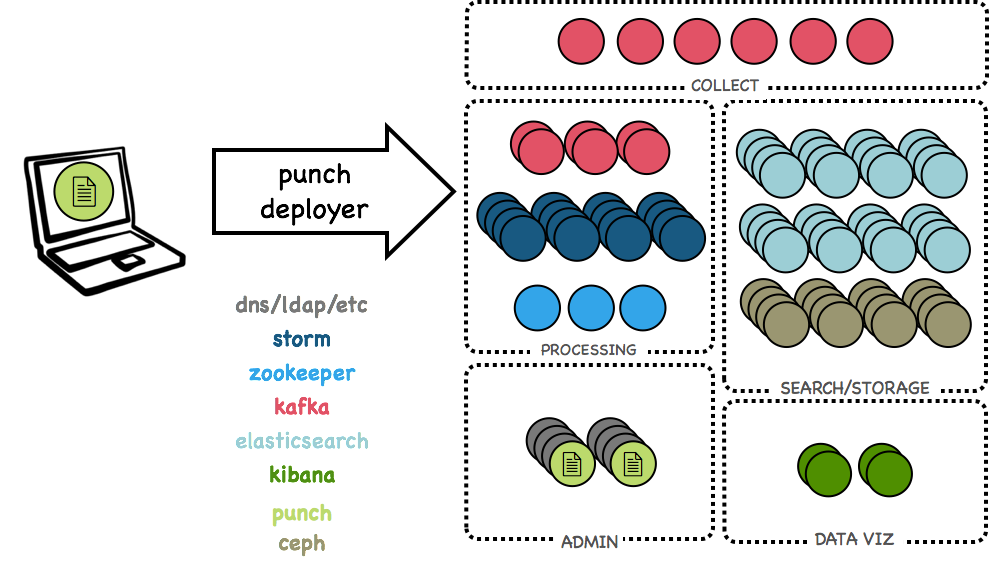

From that starting point, you simply run the punch deployer, a tool provided with the punchplatform package, that will install everything. This is illustrated next:

At this stage you have no business logic yet. But your platform is ready to host some.

Functional View

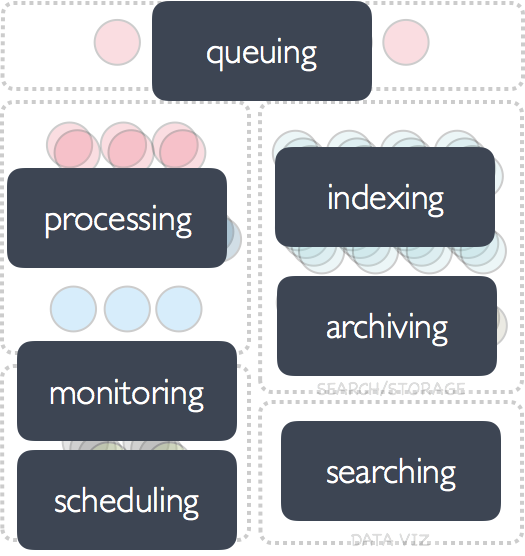

The previous picture gives a technical view of a platform. Here is a more functional view:

If you think about it, these functional patterns let you implement many (many) use cases. They are generic, powerful, simple and orthogonal patterns: the ones you need in virtually every application you can think of. This is why the punchplatform is used in production for:

- cybersecurity platforms

- stream event processing use cases

- data intelligence

- industrial system monitoring

Monitoring

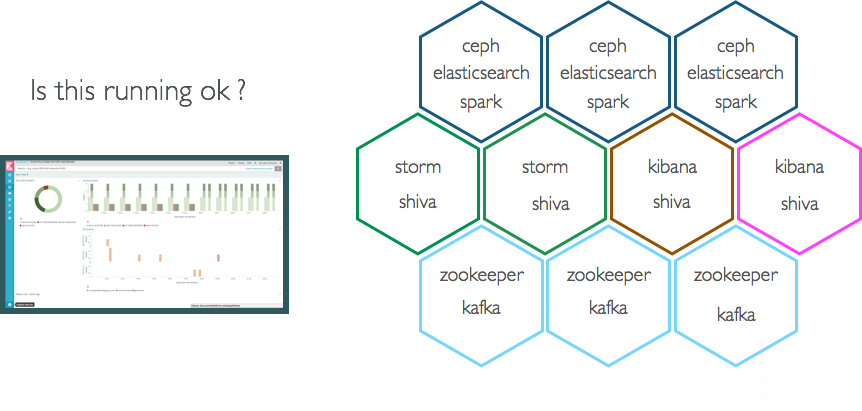

Your platform is now deployed. Do not go to production without first-class monitoring. The question is: how do you do that?

The punchplatform automatically takes care of monitoring the inner components, and keeps an up-to-date global health status. This status is in turn exposed through a REST API. All you have to do is plug in your favorite supervisor to periodically fetch that information and raise an alert should your platform be yellow or red.
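A supervisor check of this kind can be sketched as follows. The endpoint URL and the JSON shape (a top-level `status` field holding `green`, `yellow` or `red`) are assumptions made for illustration; refer to your platform's REST API documentation for the actual path and payload. As a design choice, the sketch also alerts when the status is missing or the API is unreachable, since a supervisor should not stay silent in those cases.

```python
import json
import urllib.request

# Assumption: placeholder URL, not the real punchplatform endpoint.
HEALTH_URL = "http://localhost:8080/health"


def fetch_health(url=HEALTH_URL, timeout=5.0):
    """Fetch and decode the health document (assumed to be a JSON object)."""
    with urllib.request.urlopen(url, timeout=timeout) as response:
        return json.loads(response.read().decode("utf-8"))


def needs_alert(health):
    """Return True for yellow, red, or any status other than green."""
    status = str(health.get("status", "unknown")).lower()
    return status != "green"


def check_once(fetch=fetch_health):
    """Run one supervision cycle and return a (status, alert) pair."""
    try:
        health = fetch()
    except (OSError, ValueError):
        # Network failure or an undecodable response: treat it as an alert.
        return ("unreachable", True)
    status = str(health.get("status", "unknown")).lower()
    return (status, needs_alert(health))


if __name__ == "__main__":
    status, alert = check_once()
    print(f"platform status: {status}, alert: {alert}")
```

In practice you would call `check_once` from your supervisor's scheduler rather than loop inside the script, so that the polling period and the alert routing stay under the supervisor's control.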

Important

Let us insist: this chapter is about the platform, not business logic. This health status reflects the health of the various components you deployed: Elasticsearch, Kafka, Zookeeper, etc. It will not tell you that a business component is malfunctioning. The punchplatform has business-level metrics and monitoring as well, but that is separate from, and complementary to, the platform monitoring plane.

Upgrade

You have your application up and running. One year passes. New versions of Elasticsearch, Kibana, Spark and Punch machine learning are available with great new features. How do you upgrade your platform?

This is an important service. If you have real-life experience with running big data platforms, you know how difficult it is to perform a live update without service interruption. Even on top of cloud managed services.

Updating a platform encompasses both the platform itself (as just explained) and the business components (the latter is covered in channels).

The ability of the punchplatform product and team to help with such live updates relies on various punch features: centralised configuration management (git, zookeeper), deployment tooling, dynamic monitoring and functional component deployments, etc.

Even with the packaged deployment of integrated open-source components in the punch deployer, a major upgrade may still be a complex multi-stage process, because each underlying open-source cluster has its own upgrade sequence and because business applications are expected to stay available during most of the upgrade. For this, the punch team and the custom solution integrator can cooperate to build a specifically optimized upgrade process.

For minor updates or patches (where the underlying open-source and punch-specific components allow a 'hot restart' of applications and daemons with no significant configuration change), the process is much simpler, often relying on a scheduled one-node-at-a-time restart sequence driven by the upgrade team.
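The one-node-at-a-time pattern can be sketched generically as below. This is not the punch tooling: `rolling_restart`, and the `restart` and `is_healthy` callables you pass to it, are hypothetical names for whatever your upgrade team uses to restart a component and to check that it has rejoined its cluster.

```python
import time


def rolling_restart(nodes, restart, is_healthy, retries=10, delay_s=1.0):
    """Restart nodes one at a time, waiting for each to recover.

    Stops at the first node that is still unhealthy after `retries` checks,
    so that at most one node is down at any time. Returns the list of nodes
    restarted successfully; raises RuntimeError if a node does not recover.
    """
    done = []
    for node in nodes:
        restart(node)
        for _ in range(retries):
            if is_healthy(node):
                break
            time.sleep(delay_s)
        else:
            # The loop ended without a healthy check: abort the sequence.
            raise RuntimeError(
                f"{node} did not recover; restarted so far: {done}"
            )
        done.append(node)
    return done


if __name__ == "__main__":
    # Dry run with stand-in callables: print instead of restarting anything.
    restarted = rolling_restart(
        ["node1", "node2", "node3"],
        restart=lambda node: print(f"restarting {node}"),
        is_healthy=lambda node: True,
        delay_s=0.0,
    )
    print(restarted)
```

Aborting on the first failure is deliberate: continuing the sequence with a node already down would take a second node offline and could break the availability the rolling restart is meant to preserve.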